If you’ve ever wished your phone could just see what you’re dealing with instead of making you type it all out, Meta heard you. The company just launched its new AI model, Muse Spark, now powering the Meta AI assistant, and it’s rolling out across the Meta AI app, WhatsApp, Instagram, Facebook, Messenger and even its AI glasses in the coming weeks.

It’s the first major release from Meta Superintelligence Labs, a division Mark Zuckerberg founded nine months ago with one stated goal: putting “personal superintelligence” in everyone’s hands.

That’s a big promise. So let’s look at what’s actually here right now.

Sign up for my FREE CyberGuy Report

- Get my best tech tips, urgent security alerts and exclusive deals delivered straight to your inbox.

- For simple, real-world ways to spot scams early and stay protected, visit CyberGuy.com, trusted by millions who watch CyberGuy on TV daily.

- Plus, you’ll get instant access to my Ultimate Scam Survival Guide free when you join.

Meta says Muse Spark lets its AI assistant handle text, images and more complex reasoning in new Instant and Thinking modes. The company is positioning it as a practical upgrade for everyday tasks. (Joan Cros/NurPhoto via Getty Images)

What is Meta’s Muse Spark AI model?

Muse Spark is Meta’s foundational AI model, the first in a deliberate scaling series where each version validates and builds on the last before Meta goes bigger. The team rebuilt its AI stack from the ground up over the past nine months, making this one of the fastest development cycles the company has ever run.

The model is described as small and fast by design, yet capable enough to reason through complex questions in science, math and health. Think of it as a strong foundation rather than the ceiling. Meta has already confirmed the next generation is in development.

Right now, Muse Spark powers the Meta AI assistant across the Meta AI app and meta.ai. That’s your entry point if you want to try it today.

How Meta AI’s new modes actually work

The upgraded Meta AI now runs in two modes: Instant and Thinking. Instant handles quick questions. Thinking digs into more complex problems that need stronger reasoning. You switch between them depending on what you need.

What’s genuinely new is how it handles several parts of a request at the same time. Meta AI can now launch multiple subagents in parallel. Planning a family trip to Florida? One agent drafts the itinerary, another compares Orlando to the Keys, and a third pulls up kid-friendly activities, all at once. You get a better, more complete answer in less time.

That’s a real shift. Most AI assistants work through tasks one at a time. Running them in parallel is closer to how a capable human research team actually operates, and honestly, it’s about time.

As Mark Zuckerberg wrote in a recent Facebook post, “We are building products that don’t just answer your questions but act as agents that do things for you.”

Meta AI can now see what you see

This is one of the most practical changes in Muse Spark. Meta built strong multimodal perception into the model, which means Meta AI can look at images rather than just read text you type.

Snap a photo of an airport snack shelf and ask which options have the most protein. Scan a product and ask how it stacks up against alternatives. The AI works with what you’re seeing, which cuts out the whole “let me describe what’s in front of me” step that makes most AI assistants feel clunky in real life.

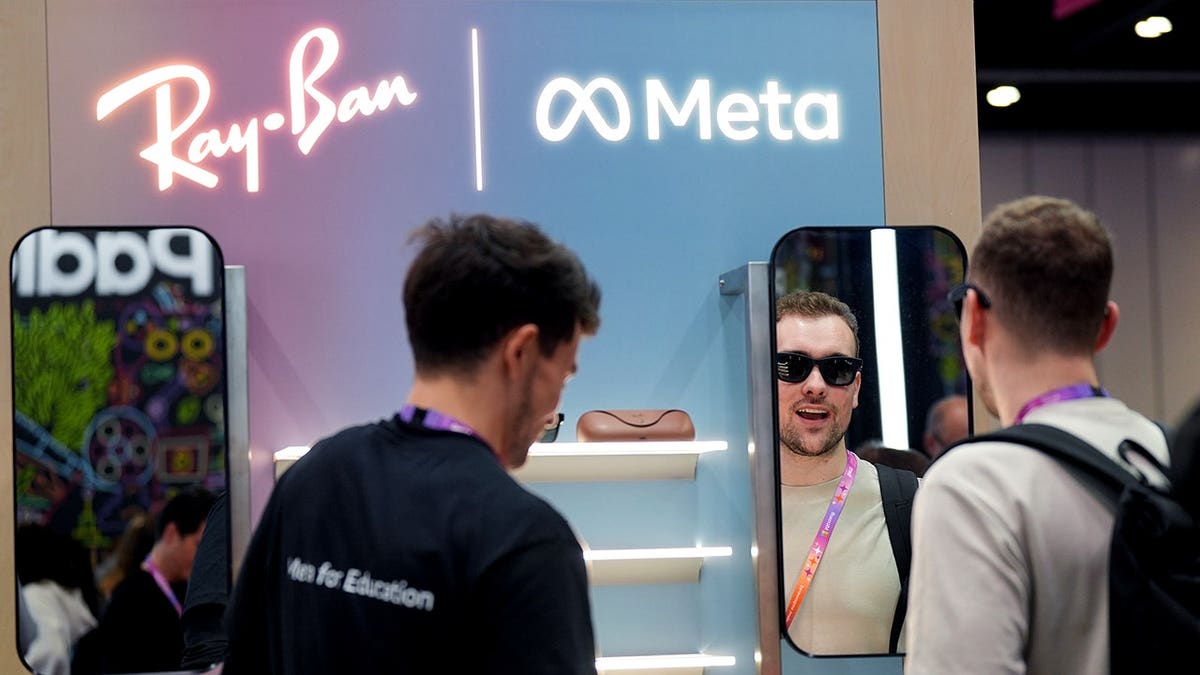

When Muse Spark rolls out to Meta’s AI glasses, this capability becomes especially interesting. The assistant will be able to see and understand your environment in real time, without you having to hold up a phone at all.

Meta is betting users will spend less time typing and more time showing AI what they see. The company says Muse Spark will soon reach apps people already use every day. (Yui Mok/PA Images via Getty Images)

How Meta AI answers health questions differently

Health is one of the top reasons people turn to AI, and Meta addressed that directly. Meta AI can now handle health questions with more detailed responses, including questions that involve images and charts.

The company worked with a team of physicians to develop the model’s ability to respond to common health questions and concerns. That doesn’t replace your doctor. But it does mean you can show Meta AI a chart from your lab results or a diagram from a health website and get a meaningful, informed response rather than a wall of disclaimers.

That’s actually useful. Most people have been there, squinting at a chart from their physician’s portal with zero context. Having something that can look at it with you changes the experience.

Meta AI shopping mode changes how you find products

Starting today in the U.S., the Meta AI app has a dedicated Shopping mode. It helps users figure out what to wear, style a room or find a gift for someone specific.

Rather than pulling from a generic product database, Shopping mode surfaces ideas from creators and communities already active on Facebook, Instagram and Threads. The result feels more like getting a recommendation from someone with a good eye than navigating a department store website.

That’s a meaningfully different approach, and it’s one Meta is uniquely positioned to pull off given the content ecosystem it already owns.

What this means for you

If you use Facebook, Instagram or WhatsApp regularly, Meta AI powered by Muse Spark is already on its way to you. You will not need to download anything new or hunt for it. It will show up inside the apps you already use. So what actually changes day to day?

First, you spend less time explaining things. If you have ever tried to describe a label, a chart or something confusing in front of you, this will feel like a big upgrade. Just snap a photo, ask your question and move on. No long explanations. No back and forth.

Next, planning gets easier. Trips, events or even simple decisions often mean jumping between tabs and comparing options. Meta AI now handles multiple parts of that process at once. You get a clearer answer faster, without doing five separate searches.

Shopping also starts to feel different. Right now, the new shopping mode is only available in the U.S. But it pulls ideas from real posts, creators and communities across Meta’s apps. That gives you suggestions that feel more like recommendations from people, not just search results.

And then there is what comes next. If Meta’s AI glasses have felt easy to ignore so far, that may change. When the AI can see what you see in real time, without you pulling out your phone, it starts to feel less like a feature and more like something built into your day. That is where this begins to stand out.

Muse Spark gives Meta AI new multimodal tools, including image understanding and parallel task handling for travel planning, shopping and everyday questions. Meta says more advanced versions are already in development. (Hollie Adams/Bloomberg via Getty Images)

Take my quiz: How safe is your online security?

Think your devices and data are truly protected? Take this quick quiz to see where your digital habits stand. From passwords to Wi-Fi settings, you’ll get a personalized breakdown of what you’re doing right and what needs improvement. Take my quiz here: CyberGuy.com.

Kurt’s key takeaway

Meta is moving quickly, and Muse Spark is the first real sign that Meta Superintelligence Labs is building something that could stick. What stands out is how practical this feels. Understanding images, handling multiple tasks at once and responding to health questions are not features designed just to dazzle in a demo. They are built for the messy, visual, fast-moving reality of everyday life. This is not the final version. Meta already has the next generation in the works. API access is coming to select partners, and open-source models are part of the plan. Think of this as the starting point. And based on how fast Meta is moving, it may not stay “early” for long.

If an AI starts planning your trips, guiding your choices and handling tasks for you, where do you draw the line? Let us know by writing to us at CyberGuy.com.

Copyright 2026 CyberGuy.com. All rights reserved.